How to integrate?

Prerequisites

Define UpTrain Function to run Evaluations

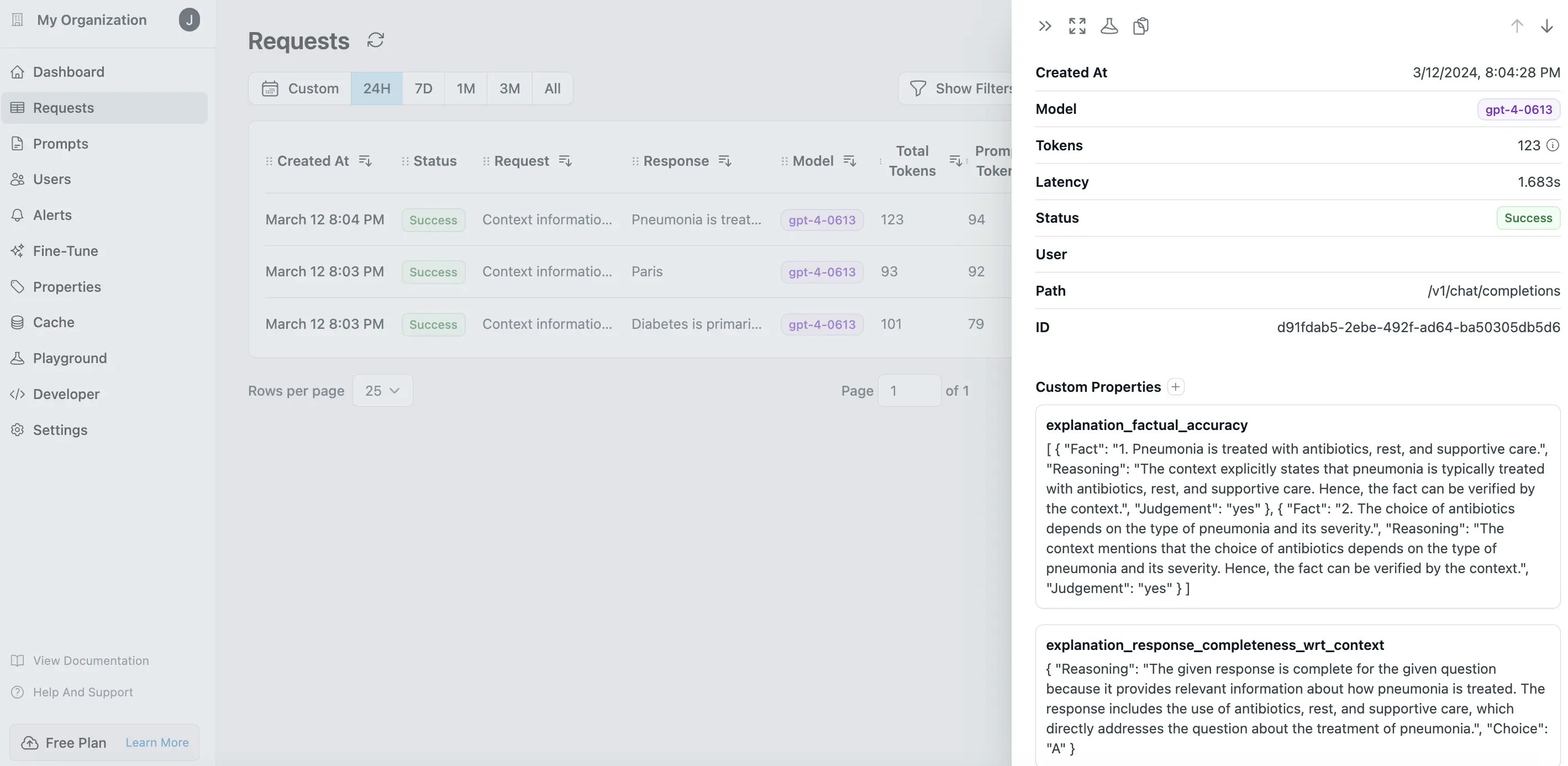

- Response Conciseness: Evaluates how concise the generated response is, that is, whether it includes information irrelevant to the question asked.

- Factual Accuracy: Evaluates whether the generated response is factually correct and grounded in the provided context.

- Context Utilization: Evaluates how completely the generated response answers the question, given the information provided in the context. Also known as Response Completeness with respect to context.

- Response Relevance: Evaluates how relevant the generated response is to the question asked.
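As a rough illustration of how the four checks above might be wired together, here is a minimal sketch. It assumes the `uptrain` package's `EvalLLM` and `Evals` interface and assumes that each result row carries a `score_<check name>` key; the helper names `make_row`, `run_uptrain_checks`, and `average_scores` are hypothetical and not part of UpTrain.

```python
from statistics import mean

# Assumed score keys, one per check listed above (not confirmed by this page).
SCORE_KEYS = [
    "score_response_conciseness",
    "score_factual_accuracy",
    "score_response_completeness_wrt_context",
    "score_response_relevance",
]


def make_row(question, context, response):
    """Build one evaluation row from a question, its retrieved context, and the LLM response."""
    return {"question": question, "context": context, "response": response}


def run_uptrain_checks(rows, openai_api_key):
    """Run the four checks on the rows (hypothetical wrapper; needs `pip install uptrain`)."""
    # Imported lazily so the other helpers work without uptrain installed.
    from uptrain import EvalLLM, Evals

    eval_llm = EvalLLM(openai_api_key=openai_api_key)
    return eval_llm.evaluate(
        data=rows,
        checks=[
            Evals.RESPONSE_CONCISENESS,
            Evals.FACTUAL_ACCURACY,
            Evals.RESPONSE_COMPLETENESS_WRT_CONTEXT,
            Evals.RESPONSE_RELEVANCE,
        ],
    )


def average_scores(results):
    """Average each score across rows, skipping rows where the score is missing or None."""
    averages = {}
    for key in SCORE_KEYS:
        values = [row[key] for row in results if row.get(key) is not None]
        if values:
            averages[key] = mean(values)
    return averages
```

Calling `average_scores(run_uptrain_checks(rows, openai_api_key))` would then give one aggregate number per check, which is convenient for tracking quality across runs.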

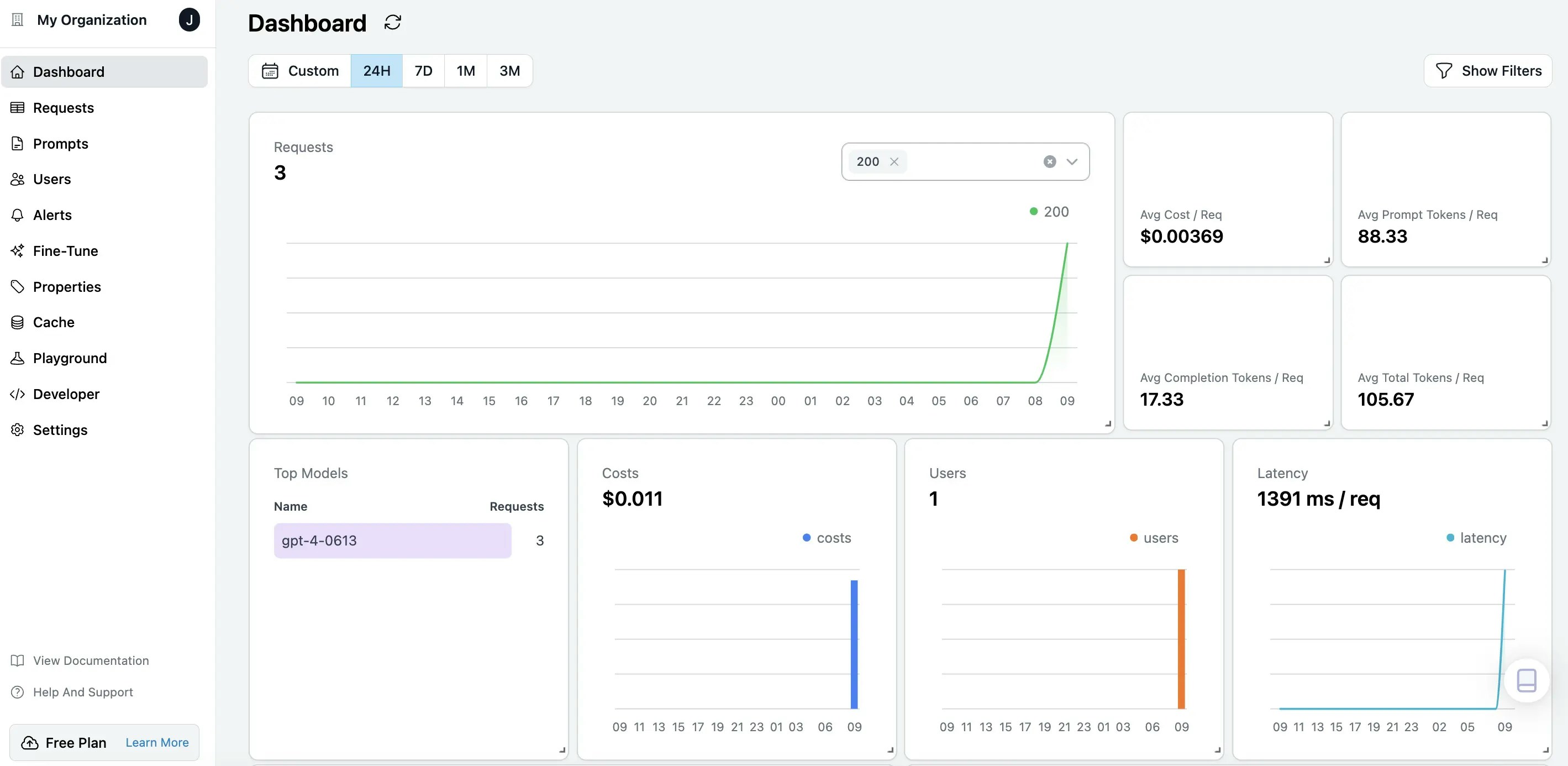

Visualize Results in Helicone Dashboards

You can log into the Helicone dashboards to monitor your LLM applications by cost, token usage, and latency.
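One possible way to make evaluation scores visible next to those metrics is to attach them to each request as Helicone custom properties. The sketch below is an assumption about how that could be done, not the method this page prescribes: it relies on Helicone's `Helicone-Auth` header and `Helicone-Property-<name>` header prefix, and the helper `helicone_headers` is a hypothetical name.

```python
def helicone_headers(helicone_api_key, scores=None):
    """Build request headers for a call routed through Helicone.

    `scores` is an optional mapping of property name to value; each entry
    becomes a `Helicone-Property-<name>` header (assumed convention).
    """
    headers = {"Helicone-Auth": f"Bearer {helicone_api_key}"}
    for name, value in (scores or {}).items():
        # Header values must be strings, so numeric scores are converted.
        headers[f"Helicone-Property-{name}"] = str(value)
    return headers
```

The resulting dictionary could be passed as extra headers to an OpenAI client whose base URL points at Helicone's proxy endpoint; check the Helicone documentation for the exact endpoint for your provider.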

Tutorial

Open this tutorial in GitHub

Have Questions?

Join our community for any questions or requests