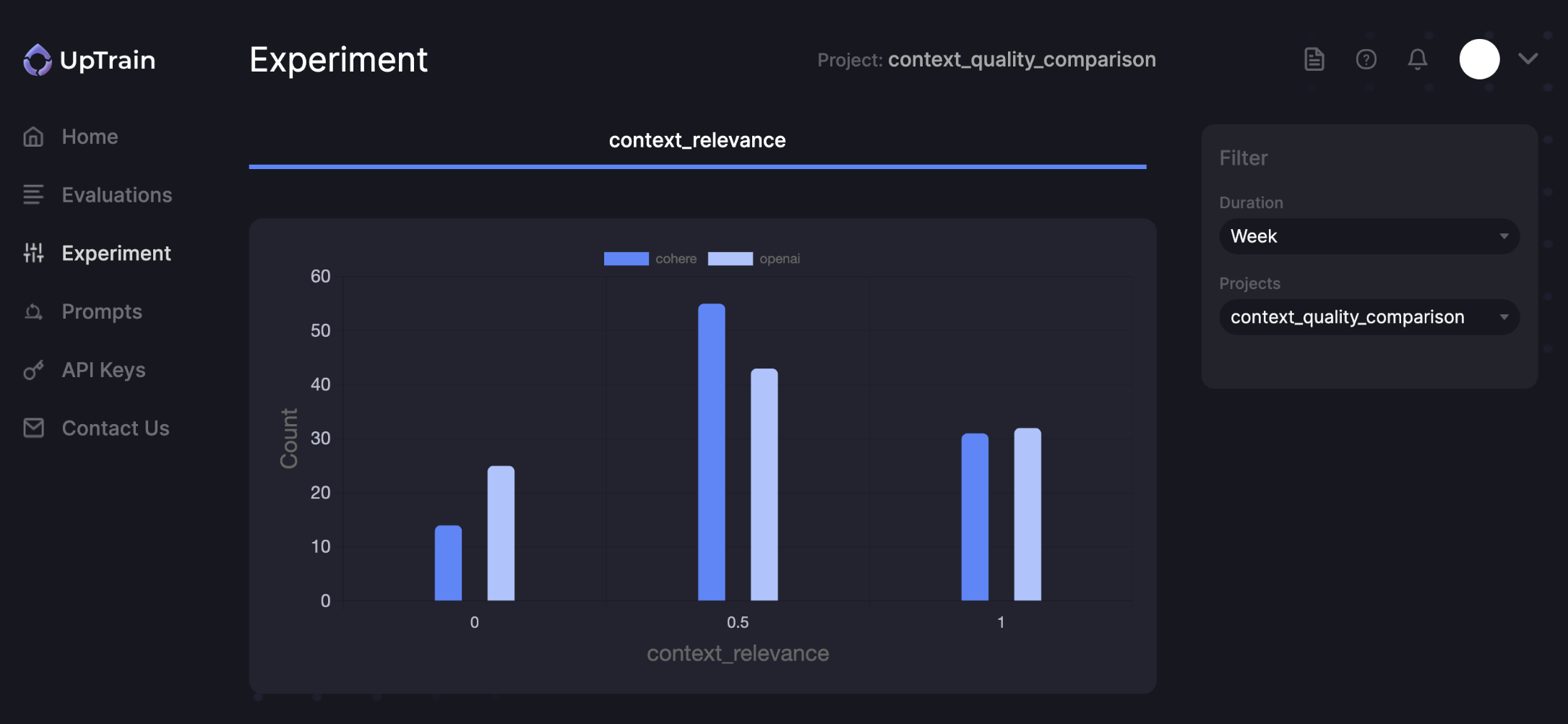

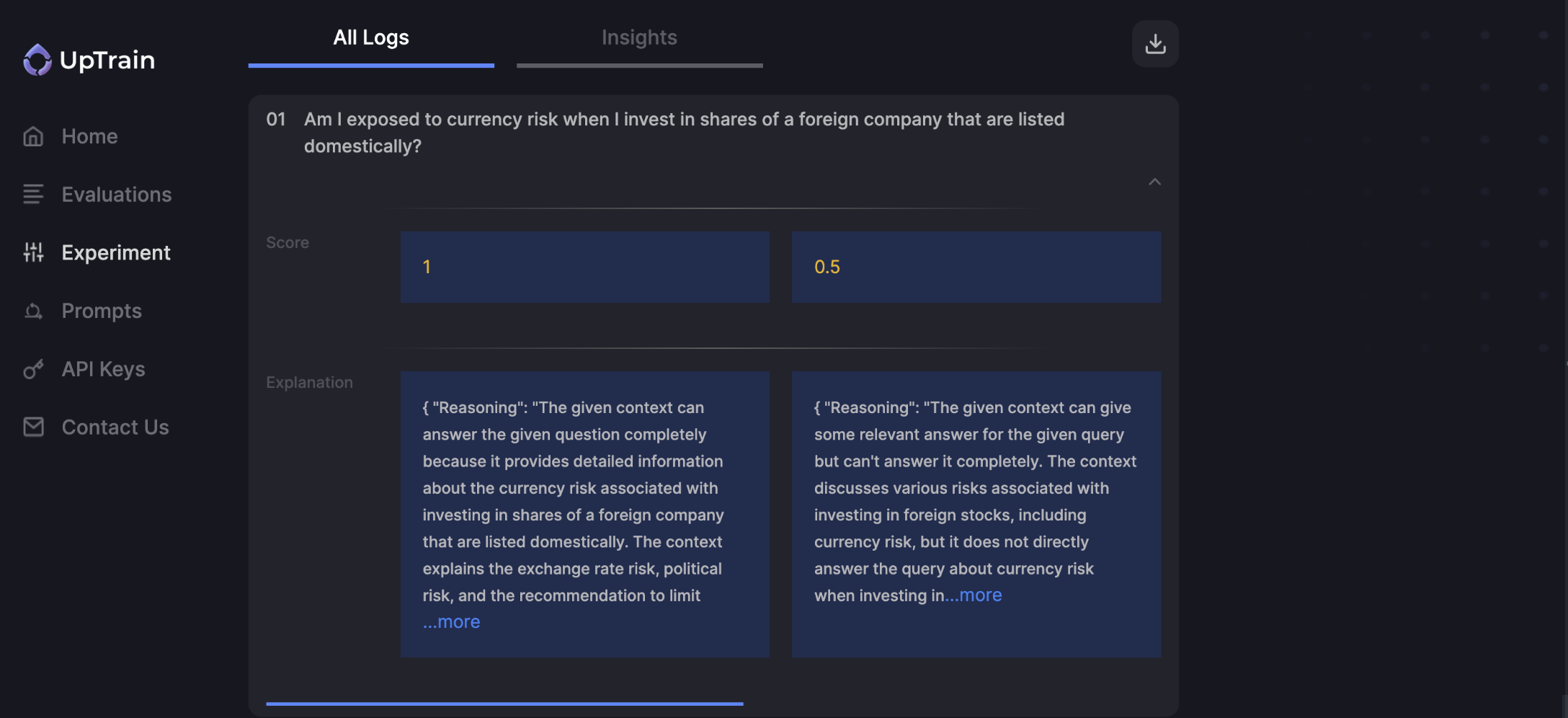

What are experiments?

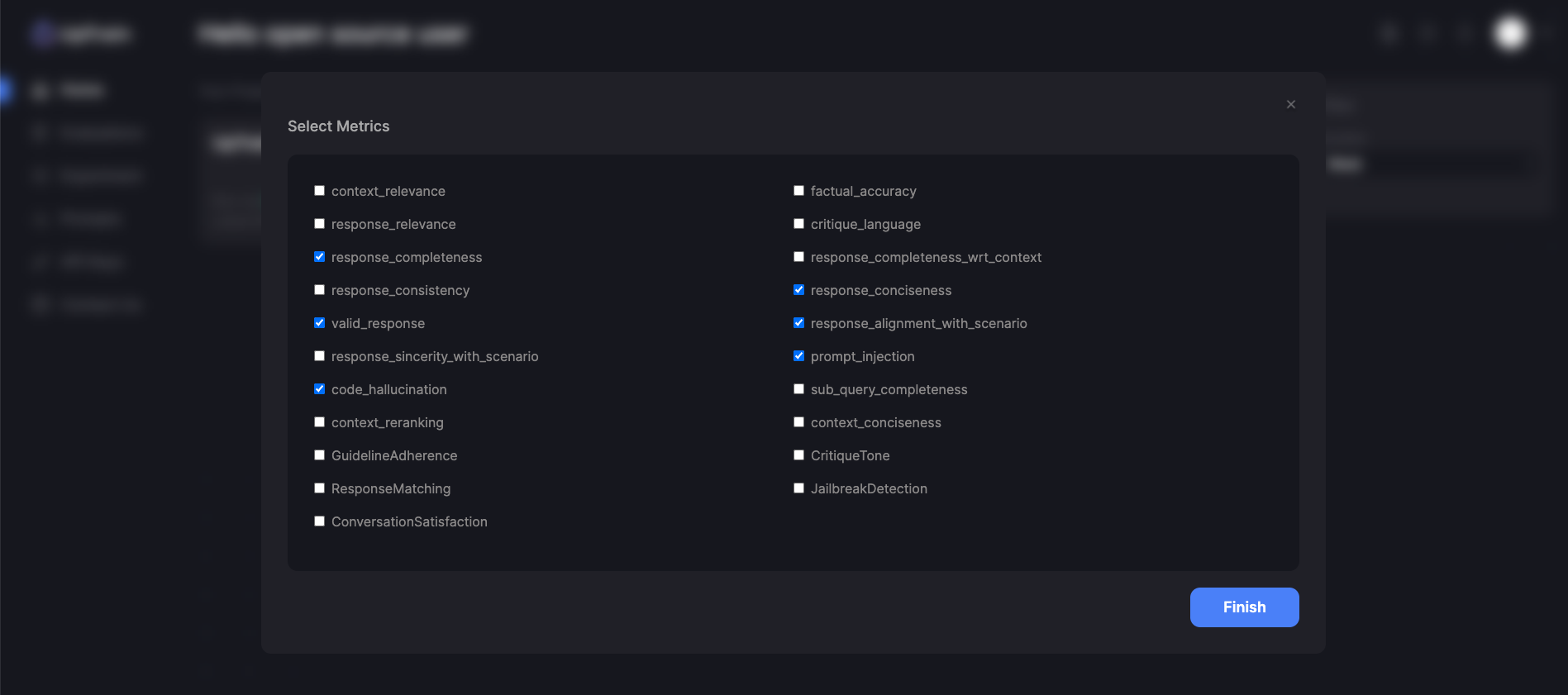

You can experiment with UpTrain on 20+ pre-configured evaluation metrics, such as:

- Context Relevance: Evaluates how relevant the retrieved context is to the question specified.

- Factual Accuracy: Evaluates whether the response generated is factually correct and grounded by the provided context.

- Response Completeness: Evaluates whether the response answers all aspects of the question specified.

How does it work?

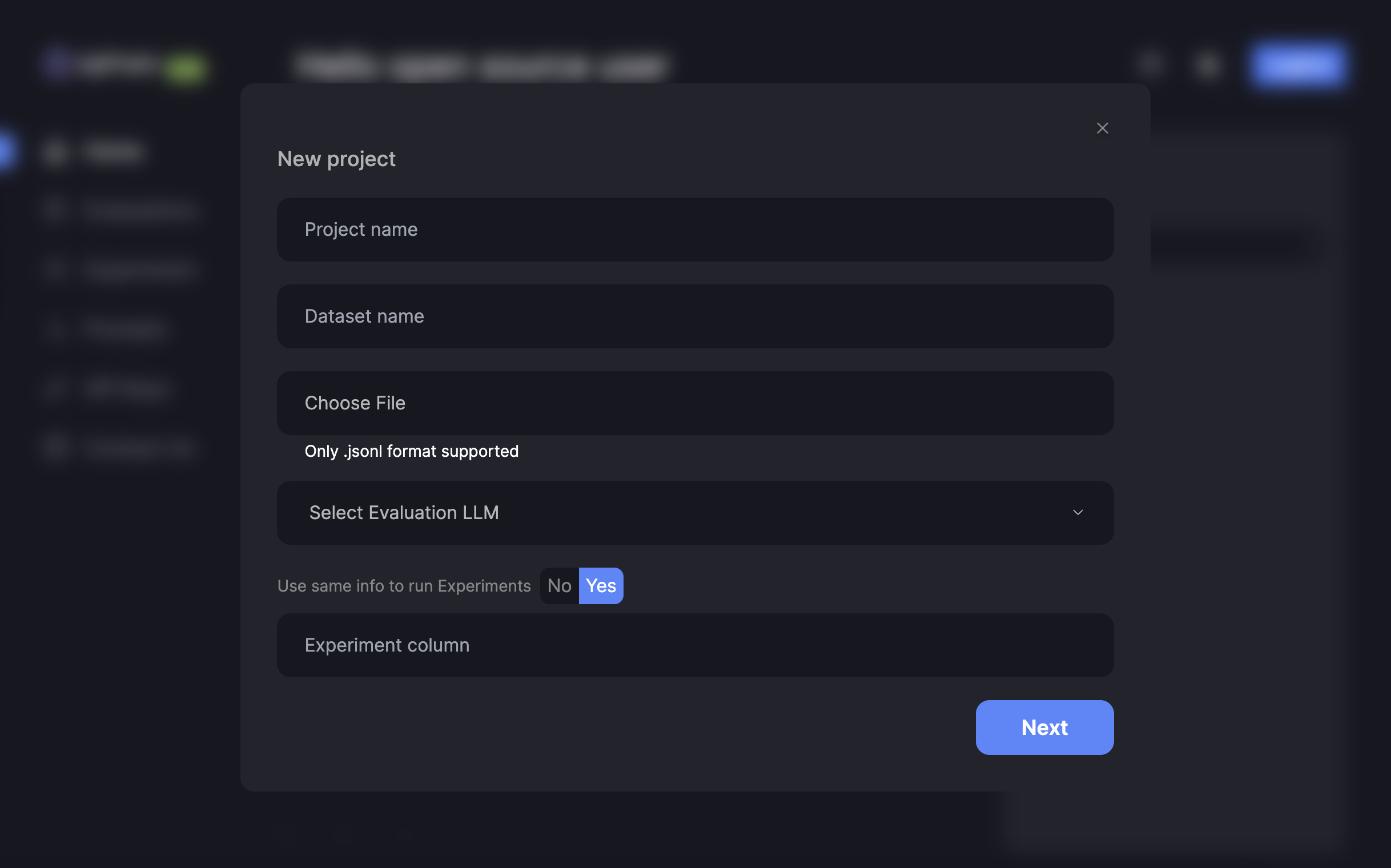

Enter Project Information

- Project name: Create a name for your project.

- Dataset name: Create a name for your dataset.

- Choose File: Upload your dataset. Sample Dataset:

- Select Evaluation LLM: Select an LLM to run evaluations.

- Use same info to run Evaluations: Yes. If you do not wish to run experiments, you can select No and follow the steps here.

- Experiment column: Enter the column to run experiments on.
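To make the role of the experiment column more concrete, here is a minimal Python sketch of a dataset in which one column identifies the variant being compared. Everything in it is an assumption for illustration: the JSONL file format, the column names (`question`, `context`, `response`, `model`), the sample rows, and the helper functions are hypothetical and are not taken from UpTrain. It only shows how rows can be grouped by the value of an experiment column so that each group could be scored separately.

```python
import json
import tempfile
from collections import defaultdict
from pathlib import Path

# Hypothetical sample rows. The column names are assumptions for this sketch;
# "model" plays the role of the experiment column.
rows = [
    {"question": "What is the capital of France?",
     "context": "Paris is the capital of France.",
     "response": "Paris.",
     "model": "model-a"},
    {"question": "What is the capital of France?",
     "context": "Paris is the capital of France.",
     "response": "The capital of France is Paris.",
     "model": "model-b"},
    {"question": "Who wrote Hamlet?",
     "context": "Hamlet is a tragedy by William Shakespeare.",
     "response": "William Shakespeare.",
     "model": "model-a"},
]


def write_jsonl(records, path):
    """Write one JSON object per line (JSONL is an assumed format here)."""
    with Path(path).open("w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record) + "\n")


def group_by_experiment(path, experiment_column):
    """Read a JSONL file and group its rows by the experiment column's value."""
    groups = defaultdict(list)
    with Path(path).open(encoding="utf-8") as f:
        for line in f:
            if line.strip():
                record = json.loads(line)
                groups[record[experiment_column]].append(record)
    return dict(groups)


# Write the sample dataset to a temporary directory, then group it.
dataset_path = Path(tempfile.mkdtemp()) / "sample_dataset.jsonl"
write_jsonl(rows, dataset_path)
groups = group_by_experiment(dataset_path, "model")

for variant, variant_rows in groups.items():
    print(variant, len(variant_rows))
```

In this sketch, the value you would enter as the experiment column is `model`, and each distinct value in that column (`model-a`, `model-b`) is one variant to compare.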

The UpTrain Dashboard is currently in beta. We would love your feedback to improve it.

Have Questions?

Join our community for any questions or requests.